SCENARIO

Facemasks are here to stay. Due to the Covid-19 pandemic, wearing masks has become common practice around the world. Most likely, they will remain part of our everyday life. Even after the pandemic is under control, they will be an essential part of our clothing, just like socks and jackets.

While masks lower the risk of droplet infections, they also cover most of the face, which makes non-verbal communication more difficult.

Facial expressions are an essential part of communication and play a crucial role in how we interact with others: we continuously receive wordless signals that allow us to communicate naturally and empathically. Not seeing whether the person next to you is smiling, angry, or annoyed makes communication much harder.

CONCEPT

The prototype suggests a way to detect facial expressions while a facemask is worn, and proposes a wearable device that produces a visual representation of the covered expressions to help people communicate more easily. It uses muscle sensors to distinguish facial expressions such as sadness, happiness, and anger.

The readings are then translated into visual outputs displayed with light in front of the facemask. This system creates a new visual language that is intuitively understandable to replace non-verbal communication.

HOW IT WORKS

We used surface electromyography (sEMG) sensors to measure the electrical activity produced when a muscle is activated, and used these readings to detect facial expressions.
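To illustrate how a raw sEMG reading can be turned into a usable "muscle is active" signal, here is a minimal Python sketch of one common approach: remove the baseline, rectify the signal, and smooth it with a moving average. It is not the prototype's actual processing; the function names, the window size, and the threshold are assumptions chosen for illustration.

```python
from statistics import mean


def emg_envelope(samples, window=5):
    """Return a smoothed activity envelope for a raw sEMG trace.

    `samples` is a list of raw sensor readings (hypothetical ADC values).
    `window` is the moving-average length in samples (an assumed value).
    """
    if not samples:
        return []
    # Remove the resting offset so the signal swings around zero.
    baseline = mean(samples)
    # Rectify: muscle activity shows up as amplitude, whatever its sign.
    rectified = [abs(s - baseline) for s in samples]
    # Smooth with a trailing moving average to get the envelope.
    envelope = []
    for i in range(len(rectified)):
        start = max(0, i - window + 1)
        envelope.append(mean(rectified[start:i + 1]))
    return envelope


def is_active(envelope, threshold):
    """Report whether the envelope ever exceeds the given threshold."""
    return bool(envelope) and max(envelope) > threshold
```

In practice the threshold would have to be calibrated per wearer and per electrode position, since signal amplitude depends strongly on skin contact and placement.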

FACIAL EXPRESSIONS

Every facial expression involves a different set of facial muscles and actions. Most expressions involve two or three muscles, and the same muscle often takes part in several expressions.
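Because muscles are shared between expressions, a single active muscle is usually not enough to identify one. The sketch below shows one possible way to handle this: each expression is described by a set of muscles, and the set of currently active muscles is compared against each of them. The muscle-to-expression table is an illustrative assumption, not the electrode layout used in the prototype, and the set-overlap score (Jaccard similarity) is a technique chosen here for simplicity.

```python
# Illustrative mapping only; the muscles listed are assumptions.
EXPRESSION_MUSCLES = {
    "happiness": {"zygomaticus_major", "orbicularis_oculi"},
    "anger": {"corrugator_supercilii", "orbicularis_oculi"},
    "sadness": {"corrugator_supercilii", "depressor_anguli_oris"},
}


def classify(active_muscles):
    """Return the best-matching expression, or None if unclear.

    The score is the Jaccard similarity between the active muscles and
    each expression's muscle set. A tie or zero overlap yields None.
    """
    active = set(active_muscles)
    scores = {}
    for expression, muscles in EXPRESSION_MUSCLES.items():
        overlap = len(active & muscles)
        scores[expression] = overlap / len(active | muscles)
    best = max(scores.values())
    winners = [name for name, score in scores.items() if score == best]
    if best == 0 or len(winners) > 1:
        return None  # No match, or a shared muscle makes it ambiguous.
    return winners[0]
```

With this table, activity in `corrugator_supercilii` alone fits anger and sadness equally well, so `classify` returns `None` until a second muscle disambiguates the reading.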

DISPLAY OUTPUT

To visualize the detected facial expression, we used a facemask with a layer of optical fiber on the outside. The optical fiber is illuminated by LEDs placed on the inner side of the mask. These LEDs are connected to a microcontroller, which can be programmed to change their color and intensity and to make them dim, blink, and so on.

The colors used to display the different emotions are based on the “Wheel of Emotions” by Robert Plutchik (1980). In addition to color, different brightness levels and degrees of dimming have been added to make the expression even easier to read.
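As a rough sketch of how an expression and its strength could be turned into an LED color, the Python example below scales a base color by an intensity value between 0 and 1. The base colors follow the usual rendering of Plutchik's wheel (joy as yellow, anger as red, sadness as blue); the exact RGB values, the function name, and the linear brightness scaling are assumptions, not the values used in the prototype.

```python
# Assumed base colors, loosely following Plutchik's wheel.
BASE_COLORS = {
    "happiness": (255, 255, 0),  # joy: yellow
    "anger": (255, 0, 0),        # anger: red
    "sadness": (0, 0, 255),      # sadness: blue
}


def led_color(expression, intensity):
    """Return an (R, G, B) tuple for the LEDs.

    `intensity` is clamped to the range 0.0 to 1.0 and scales the
    brightness linearly. Unknown expressions turn the LEDs off.
    """
    level = min(max(float(intensity), 0.0), 1.0)
    base = BASE_COLORS.get(expression, (0, 0, 0))
    return tuple(round(channel * level) for channel in base)
```

A real LED strip would likely also need gamma correction, since perceived brightness does not grow linearly with the channel value.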

PROTOTYPE

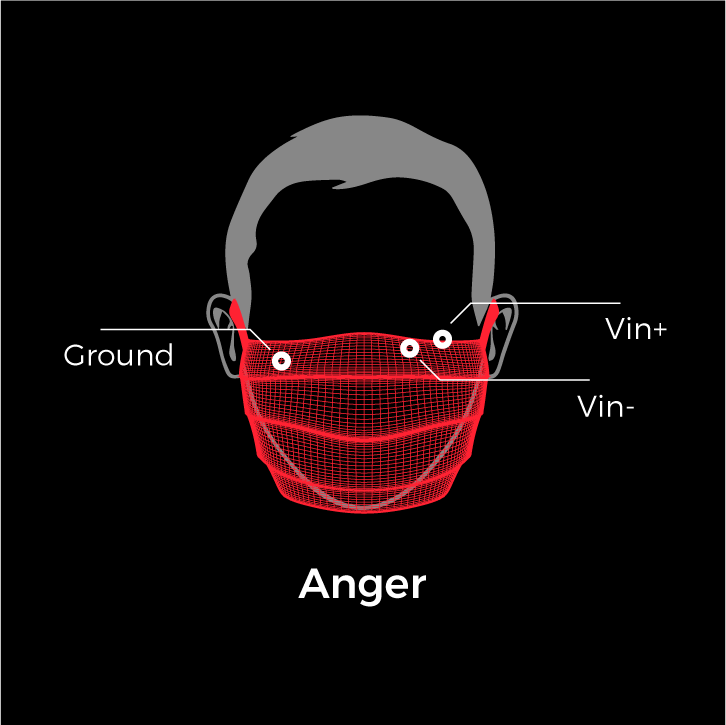

For the prototype, electrodes were attached directly to the mask at the positions of the muscles used to read expressions of happiness, anger, and sadness. A velcro strap attached to the inside made it possible to adjust the sensor position to get the required readings.

ELECTRODE PLACEMENT

ACKNOWLEDGEMENTS

This project was developed with Julius Heinemann during the 2020 exchange semester at the Department of Interaction Design at the National Taipei University of Technology (NTUT), Taiwan.

The authors express their gratitude to Prof. Chenwei Chiang for supporting this project during prototyping and research in the class Tangible Interaction Design.

For more detail on the development of this project, see the paper at the following [LINK].